A compulsory miss, also called a cold start or first reference miss, occurs the first time a block is requested, for example right after site owners activate the caching system. These misses are inevitable, as the cache doesn’t have any data in its blocks yet.

A capacity miss occurs if the cache cannot hold all the data required for executing a program. Common causes include fully-occupied cache blocks and a program working set that is larger than the cache.

A conflict miss, also known as a collision or interference miss, occurs when several blocks compete for the same cache line or set and evict one another, even though the cache may have free space elsewhere. These are the extra misses that appear when moving from a fully-associative cache to a set-associative one, and then to a direct-mapped one.

A coherence miss, also called an invalidation miss, occurs when data is accessed in a cache line that has been marked invalid. It can be a result of memory updates from external processors.
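The miss types described here can be told apart in a small simulation. The sketch below is only an illustration: it assumes a direct-mapped cache addressed by block number, and it labels a miss as a capacity miss when a fully-associative LRU cache of the same size would also have missed. The function name `classify_misses` and the sample trace are made up for this example, and coherence misses are not modeled because there is only one processor.

```python
from collections import OrderedDict


def classify_misses(trace, num_lines):
    """Classify the misses of a direct-mapped cache for a block-number trace."""
    seen = set()              # blocks referenced at least once
    direct = {}               # line index -> block currently stored there
    full_lru = OrderedDict()  # reference cache: fully associative, LRU, same size
    counts = {"hit": 0, "compulsory": 0, "capacity": 0, "conflict": 0}

    for block in trace:
        # Update the fully-associative reference cache first.
        full_hit = block in full_lru
        if full_hit:
            full_lru.move_to_end(block)       # mark as most recently used
        else:
            if len(full_lru) >= num_lines:
                full_lru.popitem(last=False)  # evict the least recently used block
            full_lru[block] = True

        # Look the block up in the direct-mapped cache.
        line = block % num_lines
        if direct.get(line) == block:
            counts["hit"] += 1
        elif block not in seen:
            counts["compulsory"] += 1         # first reference ever
        elif not full_hit:
            counts["capacity"] += 1           # would miss even with full associativity
        else:
            counts["conflict"] += 1           # evicted only because of the mapping
        direct[line] = block
        seen.add(block)

    return counts


# Hypothetical trace: blocks 0 and 4 both map to line 0 of a 4-line cache.
result = classify_misses([0, 4, 0, 4, 1, 2, 3, 5, 0, 0], num_lines=4)
print(result)
```

In this trace, blocks 0 and 4 keep evicting each other from line 0, which is what produces the conflict misses.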

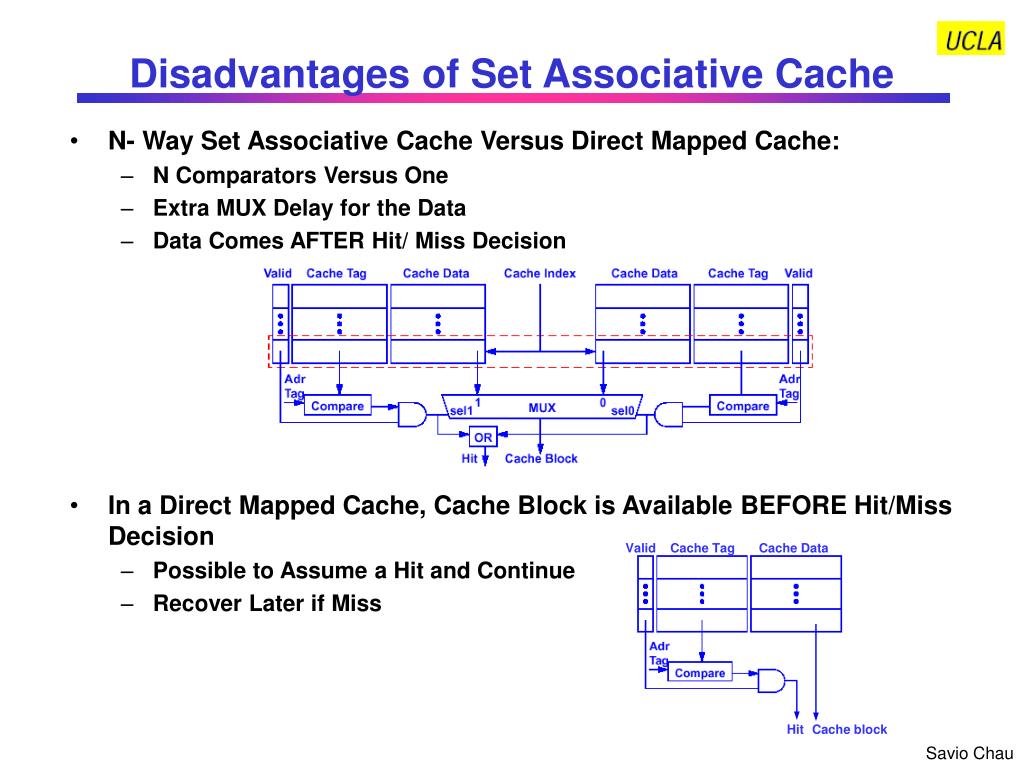

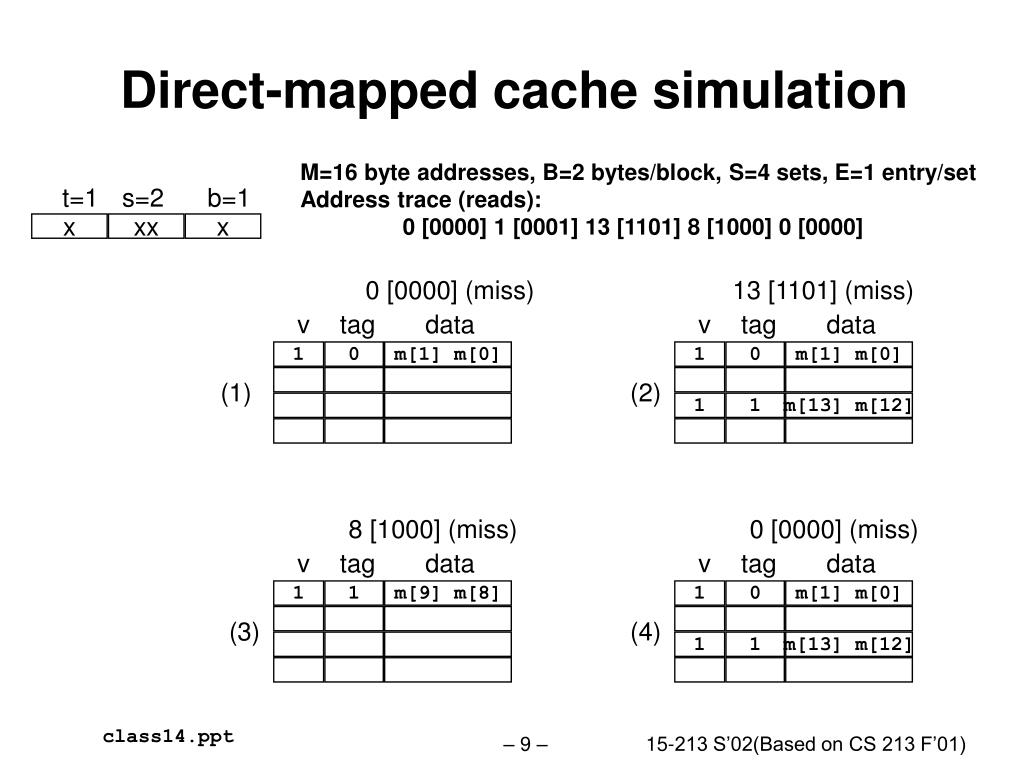

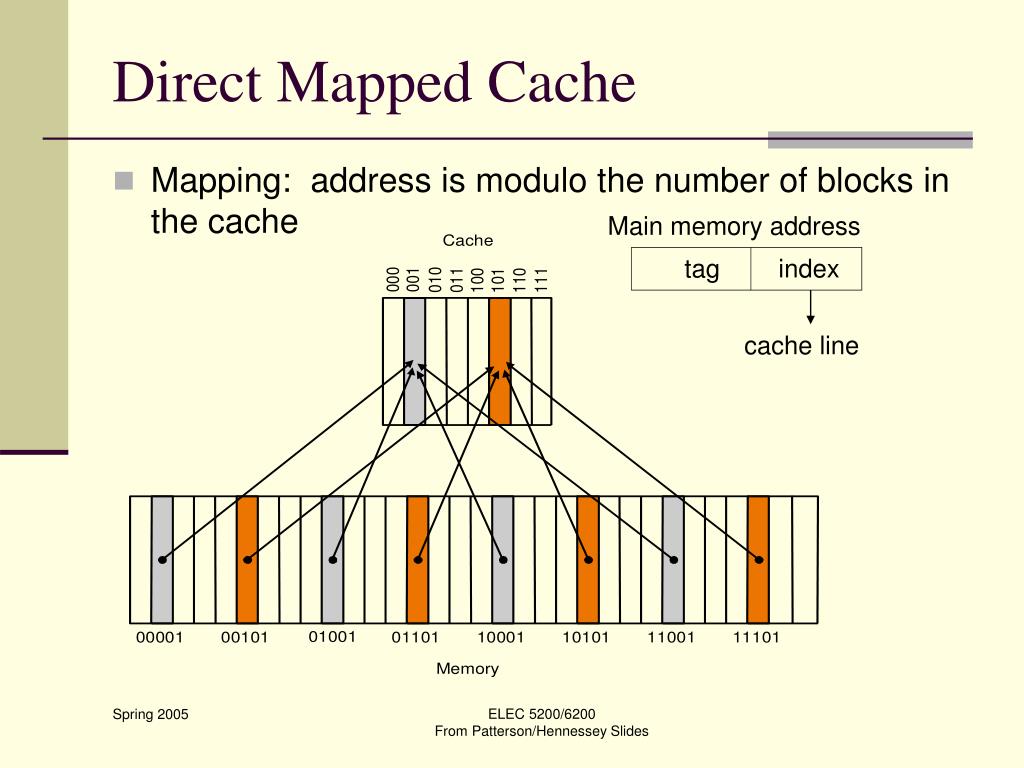

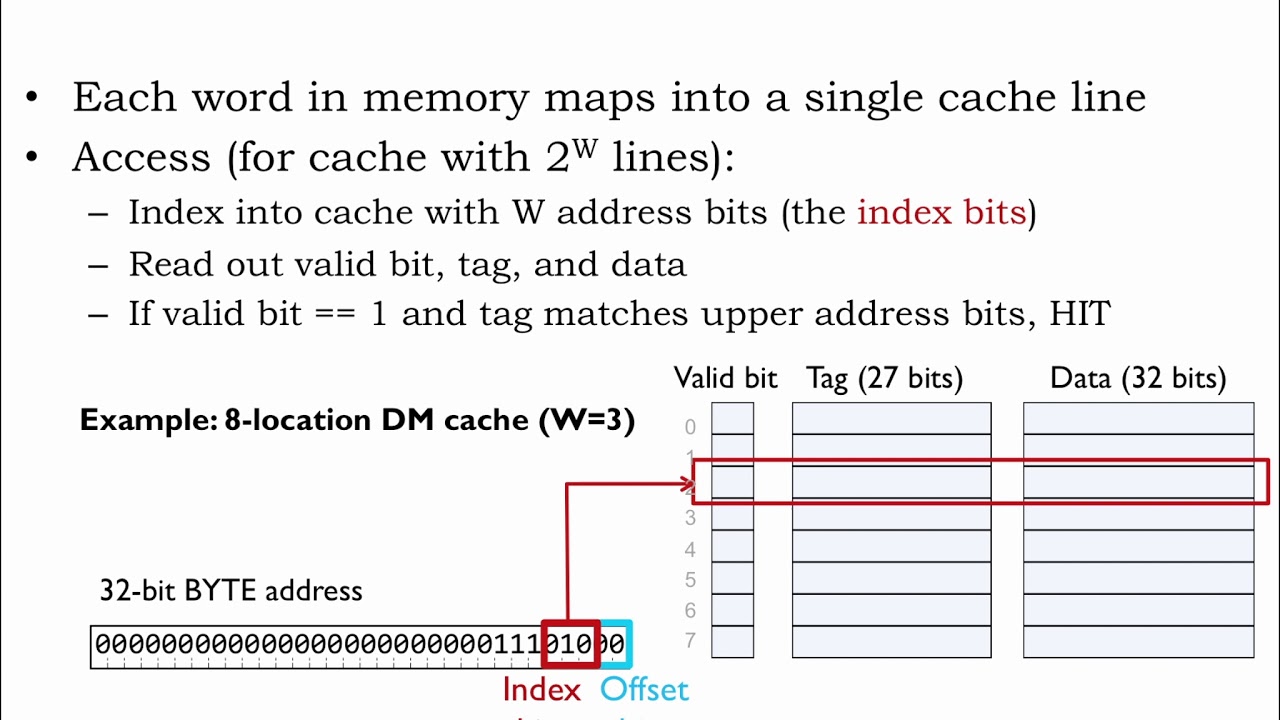

Direct-mapped caching is the simplest technique, as it maps each memory block to one particular cache line. Fully-associative caching lets any block of the main memory go to any cache line available at the moment. Set-associative caching combines the two: it arranges cache lines into sets, maps each block to one set, and lets the block occupy any line within that set, resulting in increased hits.

To help ensure successful caching, website owners can track their miss penalties and hit ratio.

A cache miss penalty refers to the delay caused by a cache miss. It indicates the extra time the system spends fetching data from a slower level of memory.

Typically, the memory hierarchy consists of three levels, which affect how fast data can be retrieved.

L1, the primary cache, is the smallest in terms of capacity but the fastest to access. It holds recently-accessed content and has designated memory units in each core of the CPU.

L2, the secondary cache, is larger than L1 but smaller than L3. It takes longer to access than L1 but is faster than L3. An L2 cache usually has designated memory in each core of the CPU as well.

L3, often called the Last-Level Cache (LLC), is the largest and slowest cache memory unit.

When a cache miss occurs, the caching system needs to search further down the hierarchy to find the stored information. In other words, if the requested data isn’t in the L1 cache, the system will then search for it in L2, and so on. The more cache levels a system needs to check, the more time it takes to complete a request. This results in an increased miss penalty, especially if the system needs to go all the way to main memory, or to the main database in the case of a website, to fetch the requested data.

Cache misses fall into four types: compulsory, capacity, conflict, and coherence.
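The way miss penalties add up across L1, L2, and L3 can be estimated with the standard average memory access time calculation: each level costs its own hit time plus its miss rate multiplied by the cost of the next level. The following is a minimal sketch; the function name `average_access_time` and all latencies and miss rates are illustrative assumptions, not measurements of any real CPU.

```python
def average_access_time(levels, memory_latency):
    """Estimate the average access time in cycles.

    levels: list of (hit_time, local_miss_rate) tuples ordered from L1 outward.
    memory_latency: cost of going all the way to main memory.
    """
    cost = memory_latency
    # Work inward from the slowest level: each level's cost is its hit time
    # plus the fraction of accesses that miss and pay for the next level.
    for hit_time, miss_rate in reversed(levels):
        cost = hit_time + miss_rate * cost
    return cost


# Assumed figures: L1 = 1 cycle, 10% misses; L2 = 10 cycles, 50% misses;
# L3 = 40 cycles, 20% misses; main memory = 200 cycles.
amat = average_access_time([(1, 0.10), (10, 0.50), (40, 0.20)], memory_latency=200)
print(f"average access time: {amat:.1f} cycles")
```

With these assumed numbers, an L1 miss costs about 50 cycles on average, so even a 10% L1 miss rate raises the average access time from 1 cycle to roughly 6.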

A website cache automatically stores a static version of the site content the first time users visit a web page. Hence, every time they revisit the website, their devices don’t need to redownload the site content. The cache will take over the query and process the request.

Generally, there are three cache mapping techniques to choose from: direct-mapped, fully-associative, and set-associative.
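The three cache mapping techniques (direct-mapped, fully-associative, and set-associative) differ mainly in how many lines a given memory block is allowed to occupy. A rough sketch of that idea follows, assuming blocks are identified by a plain block number and the line count is divisible by the number of ways; `locate` is a hypothetical helper written for this example.

```python
def locate(block_address, num_lines, ways):
    """Return (set_index, tag) for a cache with `ways` lines per set.

    ways == 1          -> direct-mapped (each block has exactly one line)
    ways == num_lines  -> fully associative (one set holding every line)
    anything between   -> set-associative
    """
    num_sets = num_lines // ways
    set_index = block_address % num_sets  # which set the block must go to
    tag = block_address // num_sets       # identifies the block within that set
    return set_index, tag


# Block 13 in an 8-line cache under each technique.
print(locate(13, num_lines=8, ways=1))  # direct-mapped
print(locate(13, num_lines=8, ways=2))  # 2-way set-associative
print(locate(13, num_lines=8, ways=8))  # fully associative
```

In the fully-associative case the set index is always 0, so the tag alone has to identify the block, which is why lookups need to compare against every line.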

A cache miss occurs when a computer or application attempts to access data that is not stored in its cache memory. When an application needs to access data, it first checks its cache memory to see if the data is already stored there. If the data is not found in cache memory, the application must retrieve the data from a slower, secondary storage location, which results in a cache miss. Cache misses can slow down computer performance, as the system must wait for the slower data retrieval process to complete.

Caching enables computer systems, including websites, web apps, and mobile apps, to store file copies in a temporary location, called a cache. A CPU cache sits between the central processing unit and the main memory. Main memory is typically dynamic random access memory (DRAM), whereas a cache is a form of static random access memory (SRAM). SRAM has a lower access time, making it well suited to improving performance.
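The check-the-cache-first flow can be sketched in a few lines. This is a simplified illustration rather than how any particular caching system is implemented: the class name `ReadThroughCache`, the dictionary standing in for slower storage, and the request paths are all assumptions made for the example.

```python
class ReadThroughCache:
    """Tiny read-through cache that counts hits and misses."""

    def __init__(self, backing_store):
        self.backing_store = backing_store  # stand-in for the slower storage
        self.entries = {}
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.entries:
            self.hits += 1                  # cache hit: served from the cache
            return self.entries[key]
        self.misses += 1                    # cache miss: go to slower storage
        value = self.backing_store[key]
        self.entries[key] = value           # keep a copy for next time
        return value

    def hit_ratio(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


slow_store = {"/index.html": "<h1>Home</h1>", "/about.html": "<h1>About</h1>"}
cache = ReadThroughCache(slow_store)
for path in ["/index.html", "/about.html", "/index.html", "/index.html"]:
    cache.get(path)
print(cache.hits, cache.misses, cache.hit_ratio())
```

The first request for each page is a miss and every repeat request is a hit, so this run ends with two hits out of four requests.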
